
For years, I advocated for developing inside of VMs or containers. I even wrote a very handy shell tool for managing the process. But a few years ago, I stopped and switched to developing on my local machine.

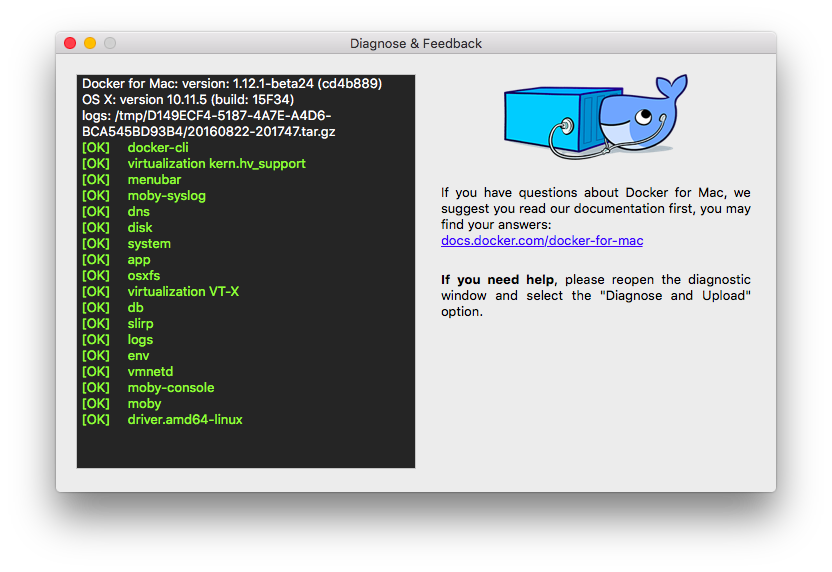

Dec 8, 2015 - After installing the latest version I ran into some issues, so I wanted to switch back to an older version, Docker 1.6. To do that, I first had to uninstall the latest version.

Working in VMs or containers adds a ton of complexity, for very little benefit. Installing databases is trivial, with any of `brew`, `yum`, or `apt-get`. Your `bin/setup` script can take care of automating that for onboarding new developers.
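As a rough illustration of the `bin/setup` idea, here is a minimal sketch that picks whichever of the three package managers is available and prints the install command. PostgreSQL and the package names are assumptions chosen for the example, and `plan_setup` is a hypothetical helper name; adapt both to your project.

```shell
#!/usr/bin/env bash
# bin/setup (sketch): plan a database install with whichever package manager exists.
# PostgreSQL and its package names are assumptions, not taken from the post.

# Print the first supported package manager found on PATH, or return 1.
detect_pkg_manager() {
  local pm
  for pm in brew apt-get yum; do
    if command -v "$pm" >/dev/null 2>&1; then
      echo "$pm"
      return 0
    fi
  done
  return 1
}

# Print the install command for the given package manager.
install_cmd() {
  case "$1" in
    brew)    echo "brew install postgresql" ;;
    apt-get) echo "sudo apt-get install -y postgresql" ;;
    yum)     echo "sudo yum install -y postgresql-server" ;;
    *)       echo "unsupported package manager: $1" >&2; return 1 ;;
  esac
}

# Show the command; only execute it when RUN=1 is set (dry run by default).
plan_setup() {
  local pm cmd
  if ! pm="$(detect_pkg_manager)"; then
    echo "no supported package manager found" >&2
    return 1
  fi
  cmd="$(install_cmd "$pm")"
  echo "+ $cmd"
  if [ "${RUN:-0}" = "1" ]; then
    $cmd
  fi
}
```

Calling `plan_setup` at the end of the script prints the command it would run; `RUN=1 bin/setup` executes it. Keeping the logic in one script means the same file can be reused from a Dockerfile or a CI job.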

The same script gets used in your Dockerfile. Your CI system is there to replicate production as closely as possible, run your comprehensive test suite (you do practice TDD, right?), and catch things like 'forgot to add a library dependency to the setup script' and 'app broke because of a library version difference'. Since switching to local-only development, plus containers and CI/CD, my life has gotten a lot nicer.

You'll never get the same environment locally as you do in prod. That's why you have staging (you do have staging, right?).

It's better to make the environment as close as possible to prod without adding massive inconvenience. But Docker both adds inconvenience and forces you to use it in production (which entails a whole other set of headaches) if you want close environmental parity. Even then, since it's lightweight virtualization, there are hundreds of things which can behave differently between dev and prod. IMHO you either want very close environmental parity (in which case full virtualization is the way to go) or you don't, in which case running locally is fine.

It's likely impossible to make volumes shared from the host to the container fast for all use cases. It's also likely impossible to make them work as expected on Windows, where the host volume is NTFS and the container is Linux. So don't use shared volumes for code. At Convox we offer a Docker development environment in the `convox start` command.

We manage syncing code into the container by watching the host file system and periodically doing a `docker cp` to get source changes into the container. It works great and shows the power of the Docker API for taking control of your containers.
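To make the "watch, then `docker cp`" idea concrete, here is a minimal polling sketch. It is not Convox's implementation: it simply uses `find -newer` against a timestamp file to spot changed files and copies each one with `docker cp`. The names `SRC_DIR`, `CONTAINER`, `DEST_DIR`, and `INTERVAL` are hypothetical placeholders, and the sketch assumes the target directories already exist inside the container.

```shell
# Hypothetical defaults; override through the environment.
SRC_DIR="${SRC_DIR:-.}"
CONTAINER="${CONTAINER:-myapp}"
DEST_DIR="${DEST_DIR:-/app}"

# List regular files under directory $1 modified more recently than stamp file $2.
changed_files() {
  find "$1" -type f -newer "$2"
}

# Copy every changed file into the container, then advance the stamp.
# The next stamp is created before scanning so edits made during the
# copy are picked up on the following pass.
sync_once() {
  local src stamp f rel
  src="$1"
  stamp="$2"
  touch "$stamp.next"
  changed_files "$src" "$stamp" | while IFS= read -r f; do
    rel="${f#"$src"/}"
    docker cp "$f" "$CONTAINER:$DEST_DIR/$rel"
  done
  mv "$stamp.next" "$stamp"
}

# Poll forever; only files changed after startup are synced.
watch_and_sync() {
  local stamp
  stamp="$(mktemp)"
  while true; do
    sync_once "$SRC_DIR" "$stamp"
    sleep "${INTERVAL:-2}"
  done
}
```

Running `CONTAINER=web SRC_DIR=./src watch_and_sync` would keep pushing edits into a container named `web`. Polling is the simplest portable choice; an event-based watcher would react faster but depends on platform-specific tools.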

Docker for Bioconductor

Docker allows software to be packaged into containers: self-contained environments that contain everything needed to run the software. Containers can be run anywhere (containers run in modern Linux kernels, but can be run on Windows and Mac as well using a virtual machine). Containers can also be deployed in the cloud using cloud providers.

With Bioconductor containers, we hope to enhance:

- reproducibility: If you run some code in a container today, you can run it again in the same container (with the same tag) years later and know that nothing in the container has changed. You should always take note of the tag you used if you think you might want to reproduce some work later.
- ease of use: With one command, you can be running the latest release or devel Bioconductor. No need to worry about whether packages and system dependencies are installed.
- convenience: Sometimes you just want a fresh R with no packages installed, in order to test something; or you typically don't have microarray packages installed but suddenly you need to do a microarray analysis. Containers make this easy.

Our aim is to provide up-to-date containers for the current release and devel versions of Bioconductor, and some older versions. Bioconductor's Docker images and their source Dockerfiles are both published online.

Our release images are based on upstream R/RStudio Server containers and built when a Bioconductor release occurs. Our devel images are likewise based on upstream containers and built weekly with the latest versions of R and Bioconductor packages.

For each supported version of Bioconductor, we provide several images:

- base2: Contains R, RStudio, and a single Bioconductor package (BiocManager, providing the install function for installing additional packages). Also contains many system dependencies for Bioconductor packages. Useful when you want a relatively blank slate for testing purposes. R is accessible via the command line or via RStudio Server.
- core2: Built on base2, so it contains everything in base2, plus a selection of core Bioconductor packages.
- protmetcore2: Built on core2, so it contains everything in core2, plus a selection of core Bioconductor packages recommended for proteomic and metabolomics analysis.
- metabolomics2: Everything in protmetcore2, plus select packages from the Metabolomics biocView.

Current Containers

Maintained by the Bioconductor Core Team.

Maintained by Steffen Neumann. Maintained as part of "PhenoMeNal, funded by Horizon2020 grant 654241".

Maintained by Laurent Gatto.

Legacy Containers

The following containers are legacy and no longer updated. They have been kept to retain previous versions available via tags:

- bioconductor/develbase
- bioconductor/develcore
- bioconductor/develflow
- bioconductor/develmicroarray
- bioconductor/develproteomics
- bioconductor/develsequencing
- bioconductor/develmetabolomics
- bioconductor/releasebase
- bioconductor/releasecore
- bioconductor/releaseflow
- bioconductor/releasemicroarray
- bioconductor/releaseproteomics
- bioconductor/releasesequencing
- bioconductor/releasemetabolomics

Using the containers

The following examples use the bioconductor/develbase2 container. Note that you may need to prepend `sudo` to all `docker` commands.

Prerequisites: On Linux, you need Docker installed; on Mac or Windows, you need Docker Toolbox installed and running.

To run RStudio Server:

`docker run -p 8787:8787 bioconductor/develbase2`

You can then open a web browser pointing to your docker host on port 8787. If you're on Linux and using default settings, the docker host is 127.0.0.1 (or localhost), so the full URL to RStudio would be http://localhost:8787. If you are on Mac or Windows and running Docker Toolbox, you can determine the docker host with the `docker-machine ip default` command. Log in to RStudio with the username rstudio and password rstudio.
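The steps above can be wrapped in a small helper. This is only a sketch: the function names are hypothetical, and it assumes that finding `docker-machine` on the PATH means Docker Toolbox is in use with a machine named `default`, as in the text. It starts the container detached with `-d` so the URL can be printed right away.

```shell
IMAGE="bioconductor/develbase2"   # image name as given in the text
PORT=8787

# Build the RStudio URL for a docker host ($1) and port ($2).
rstudio_url() {
  echo "http://$1:$2"
}

# Use docker-machine's IP when Docker Toolbox is present, else localhost.
docker_host() {
  if command -v docker-machine >/dev/null 2>&1; then
    docker-machine ip default
  else
    echo "localhost"
  fi
}

# Start RStudio Server in the background and print where to find it.
start_rstudio() {
  docker run -d -p "$PORT:$PORT" "$IMAGE"
  echo "RStudio Server: $(rstudio_url "$(docker_host)" "$PORT")"
}
```

Calling `start_rstudio` prints the container ID from `docker run` followed by the URL to open in a browser.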

If you want to run RStudio as a user on your host machine, in order to read/write files in a host directory, additional setup is required.

To run R from the command line:

`docker run -ti bioconductor/develbase2 R`

To open a Bash shell on the container:

`docker run -ti bioconductor/develbase2 bash`

Note: The `docker run` command is very powerful and versatile. For full documentation, type `docker run --help` or consult the Docker documentation.

Modifying the images

There are two ways to modify these images:

1. Making changes in a running container and then committing them using the `docker commit` command.
2. Using a Dockerfile to declare the changes you want to make.
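As a sketch of the Dockerfile route, the helper below writes a two-line Dockerfile that extends a base image and installs one extra package with `BiocManager::install`, then builds it. The function names, the image tag `my-bioc`, and the choice of `GenomicRanges` are hypothetical, picked only for illustration.

```shell
# Write a Dockerfile to $3 that extends base image $1 with Bioconductor package $2.
write_dockerfile() {
  local base pkg out
  base="$1"
  pkg="$2"
  out="$3"
  cat > "$out" <<EOF
FROM $base
RUN R -e 'BiocManager::install("$pkg")'
EOF
}

# Generate the Dockerfile in a scratch directory and build it.
# "my-bioc" is a placeholder tag for the resulting image.
build_image() {
  local dir
  dir="$(mktemp -d)"
  write_dockerfile "bioconductor/develbase2" "GenomicRanges" "$dir/Dockerfile"
  docker build -t my-bioc "$dir"
}
```

Because the changes are declared in a file, the image can be rebuilt from scratch at any time, which is harder to guarantee with changes committed from a running container.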

The second way is the recommended way.

List of packages installed on the core2 container

These packages, plus their dependencies, are installed:

- BiocManager
- OrganismDbi
- ExperimentHub
- Biobase
- BiocParallel
- biomaRt
- Biostrings
- BSgenome
- ShortRead
- IRanges
- GenomicRanges
- GenomicAlignments
- GenomicFeatures
- SummarizedExperiment
- VariantAnnotation
- DelayedArray
- GSEABase
- Gviz
- graph
- RBGL
- Rgraphviz
- rmarkdown
- httr
- knitr
- BiocStyle

Acknowledgements

Thanks to the upstream project for providing the R/RStudio Server containers upon which ours are based.
